Everyone’s talking about how cloud-hosted services can boost user productivity. But as more software moves online, how can those services guarantee fast access for everyone?

The best strategy is to shuffle hosted services minute by minute among the best-performing cloud hosts around the world, Marty Kagan, CEO and co-founder of internet performance monitoring firm Cedexis, told attendees at our Structure 2012 conference on Wednesday.

“Single platforms are dangerous”

Kagan showed Cedexis data illustrating how a single cloud host like Google App Engine can deliver perfectly acceptable latency on a regular basis – until a sudden spike from a region in which it doesn’t excel drags down load times for many users around the world.

“Clearly the lesson is, if you are going to deploy in a cloud, you need to not rely on a single provider to give you high availability,” Kagan said at Structure 2012.

“Multi-cloud strategies are key to delivering a global audience”

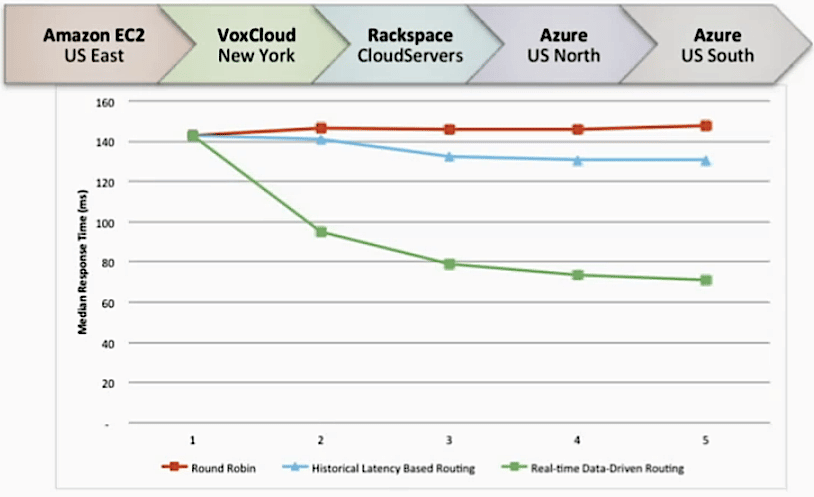

But Kagan also presented data showing that distributed cloud hosting can actually hurt load times if services pick their hosts without knowing how each host is currently performing in regions where a user stampede might occur at any moment.

“If you randomly round-robin around traffic all across those, you see the latency actually gets worse,” he explained.

“The fresher the data is, the better performance you’re going to see for your visitors”

“What if, instead of historical data, you had access to real-time telemetry, and you made individual decisions throughout the day based on what you’re currently seeing in the network?” Kagan asked.

“The improvements are significant – more than 50 percent (latency) gains – and those improvements continue even as you get out to five or six platforms.”

He concluded: “In short, if you are going to deploy in a hybrid- or multi-cloud environment, you want to locate using real-time telemetry rather than static policies.”
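To make the contrast between the three policies Kagan described concrete, here is a minimal Python sketch. It is not Cedexis’ method: the provider names, the latency samples, and the function names are all invented for illustration, and a real system would gather measurements per region and refresh them continuously.

```python
from itertools import cycle

# Hypothetical latency samples in milliseconds, oldest first, newest last.
# Provider names and numbers are made up for illustration only.
telemetry = {
    "cloud_a": [60, 65, 250],    # good history, but spiking right now
    "cloud_b": [140, 138, 135],  # steady
    "cloud_c": [150, 140, 95],   # currently the fastest
}


def round_robin(providers):
    """Static policy: rotate through providers regardless of performance."""
    return cycle(providers)


def pick_by_history(samples):
    """Historical policy: choose the lowest average latency over all samples."""
    return min(samples, key=lambda name: sum(samples[name]) / len(samples[name]))


def pick_by_latest(samples):
    """Real-time policy: choose the lowest most recent latency."""
    return min(samples, key=lambda name: samples[name][-1])


if __name__ == "__main__":
    rotation = round_robin(list(telemetry))
    print("round-robin:", [next(rotation) for _ in range(4)])
    print("historical: ", pick_by_history(telemetry))
    print("real-time:  ", pick_by_latest(telemetry))
```

With these made-up numbers, the historical policy still favors the provider that is spiking at the moment, and round-robin sends a third of the traffic to it, while the real-time policy routes around the spike.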
